Social Media Platforms Struggle with AI Content Transparency as Optional Labels Fall Short

The ongoing challenge of identifying artificial intelligence-generated content on social media has taken another tentative step forward, though I believe it’s still woefully inadequate. Meta’s latest experiment with voluntary disclosure labels represents the kind of half-measure that sounds good in press releases but fails to address the real problem plaguing digital platforms today.

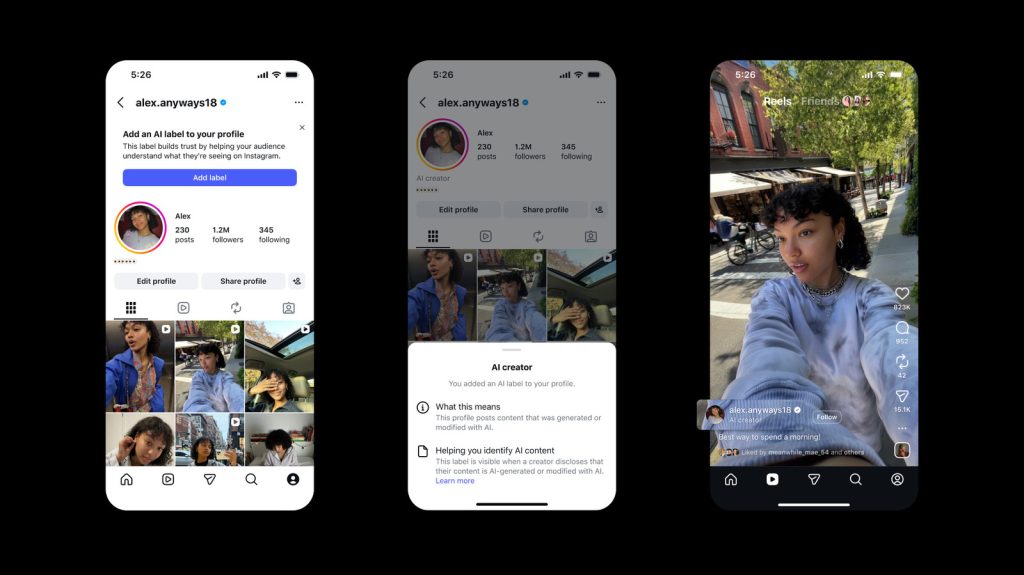

The new feature lets content creators voluntarily mark their profiles with labels indicating that they produce AI-generated or AI-modified material. These badges appear both on user profiles and alongside individual posts, stating clearly that the content was created or altered using artificial intelligence tools. This is a more direct approach than the company's existing, ambiguous indicators, which merely suggest that content "may" have been AI-generated.

Why Voluntary Compliance Doesn’t Work

Here’s where I think the platform fundamentally misses the mark: making these labels optional is like asking people to voluntarily admit when they’re speeding. The creators most likely to mislead audiences with undisclosed AI content are precisely those who won’t voluntarily label themselves. This system primarily benefits honest creators who were probably being transparent anyway, while doing little to catch bad actors.

The company's existing detection methods are admittedly inconsistent, as recent criticism from Meta's Oversight Board has highlighted. Without reliable automated detection, platforms are essentially operating on an honor system that serves neither creators nor consumers effectively.

Who Benefits and Who Doesn’t

Legitimate AI artists and content creators who want to build trust with their audiences will likely embrace these labels. For them, transparency can actually be a selling point, demonstrating their innovative use of technology. Educational content creators and those experimenting with AI tools may also find value in clearly marking their work.

However, this approach does nothing for the average social media user trying to distinguish between authentic and artificial content. If anything, it may create a false sense of security, where unlabeled content appears more trustworthy simply because it lacks an AI disclaimer.

The Broader Industry Challenge

This incremental approach reflects the broader struggle across social media platforms to balance innovation with transparency. While encouraging voluntary disclosure sounds reasonable, I believe it's insufficient given how quickly AI generation tools are becoming more sophisticated.

The real issue isn't just about labeling; it's about the fundamental shift in how we consume and trust digital content. As AI-generated material becomes indistinguishable from human-created content, platforms need more robust solutions than optional badges.

What concerns me most is that this voluntary system may actually delay the development of more effective mandatory disclosure requirements. By implementing a feel-good solution that appears to address transparency concerns, platforms can avoid the harder work of building comprehensive detection systems or establishing industry-wide standards.

Until social media companies are willing to make AI disclosure mandatory and invest seriously in detection technology, users will continue navigating an increasingly murky landscape where authentic and artificial content blend together without clear boundaries.