Social Media Giants Deploy Controversial AI Technology to Scan User Photos for Age Detection

The latest development in social media age verification has sparked both praise and concern as major platforms implement sophisticated artificial intelligence systems that analyze physical characteristics in user-uploaded content. This technology represents a significant shift in how companies approach the challenge of keeping underage users off adult-oriented platforms.

How the Technology Works

The AI system examines visual elements in photos and videos, focusing on physical indicators such as height proportions and skeletal development patterns. Combined with contextual analysis of text content—including references to school grades, birthday celebrations, and social interactions—these systems create comprehensive age assessment profiles.

Notably, the platforms explicitly distinguish this approach from facial recognition technology. The focus is on general physical development markers rather than on identifying specific individuals. I believe that distinction is crucial: it addresses some privacy concerns while still raising others.

Implementation and Consequences

The system, currently being tested in select regions before broader deployment, automatically flags accounts suspected of belonging to users under 13. Flagged accounts are deactivated immediately and can be reactivated only by submitting age verification documentation. Accounts whose owners fail to provide adequate proof are permanently deleted.

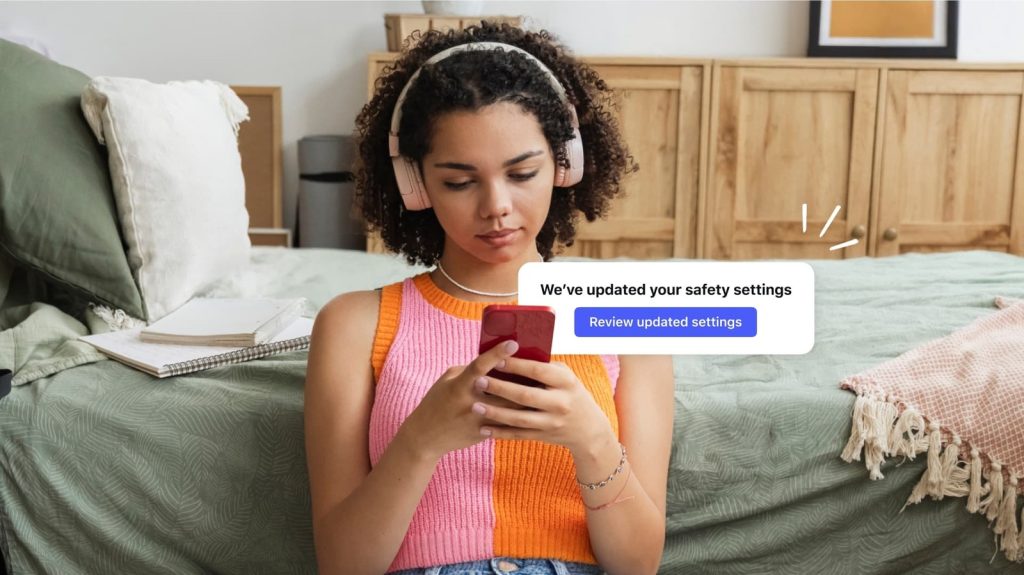

Users aged 13-15 are automatically enrolled in specialized teen accounts with enhanced parental controls and safety measures. This tiered approach is expanding across multiple platforms and geographic regions, including Brazil, European Union countries, and the United States.
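To make the tiers concrete, here is a minimal sketch of how such routing might look. It is my own illustration, not any platform's actual implementation: the names `AgeEstimate`, `Action`, `route_account`, and `resolve_deactivation` are hypothetical, and the confidence threshold is an assumption I added, since nothing has been disclosed about how certain the system must be before it acts.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    DEACTIVATE_PENDING_PROOF = "deactivate until age documentation is provided"
    ENROLL_TEEN_ACCOUNT = "move to a teen account with parental controls"
    NO_CHANGE = "leave the account unchanged"


@dataclass
class AgeEstimate:
    estimated_age: float  # hypothetical model output, in years
    confidence: float     # hypothetical score between 0 and 1


# Assumed threshold: the article's sources do not say how confident
# the system must be before it flags an account.
MIN_CONFIDENCE = 0.9


def route_account(estimate: AgeEstimate) -> Action:
    """Map an age estimate to the tier described in the article."""
    if estimate.confidence < MIN_CONFIDENCE:
        return Action.NO_CHANGE
    if estimate.estimated_age < 13:
        return Action.DEACTIVATE_PENDING_PROOF
    if estimate.estimated_age < 16:
        return Action.ENROLL_TEEN_ACCOUNT
    return Action.NO_CHANGE


def resolve_deactivation(proof_accepted: bool) -> str:
    """Outcome for a deactivated account once documentation is reviewed."""
    return "reactivated" if proof_accepted else "permanently deleted"
```

Even in this toy version, everything hinges on the threshold: set it too low and legitimate users get swept up, set it too high and the system catches almost no one.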

My Take on This Development

This technology raises fascinating questions about digital privacy versus child safety. While I appreciate the genuine effort to protect younger users, the implications of AI systems analyzing our physical characteristics feel uncomfortably invasive. The technology seems most beneficial for parents genuinely concerned about their children’s online safety, but it’s problematic for privacy advocates and anyone uncomfortable with algorithmic body analysis.

The approach will likely prove most effective for obvious cases—clearly underage users who shouldn’t be on these platforms anyway. However, I’m skeptical about its accuracy for edge cases, particularly teenagers who may appear older or younger than their actual age. The potential for false positives could unfairly impact legitimate users.
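A quick back-of-the-envelope calculation shows why false positives worry me. The numbers below are purely hypothetical (no platform has published accuracy figures that I know of), and `flagged_breakdown` is just an illustrative helper, but they show how a seemingly accurate system can still flag many legitimate users when underage accounts are a small share of the total.

```python
def flagged_breakdown(total_users, underage_share, true_positive_rate, false_positive_rate):
    """Split flagged accounts into correctly and wrongly flagged groups."""
    underage = total_users * underage_share
    legitimate = total_users - underage
    correctly_flagged = underage * true_positive_rate
    wrongly_flagged = legitimate * false_positive_rate
    # Share of all flagged accounts that really belong to underage users.
    precision = correctly_flagged / (correctly_flagged + wrongly_flagged)
    return correctly_flagged, wrongly_flagged, precision


# Hypothetical inputs: 1,000,000 users, 2% underage, 90% of underage
# accounts caught, and 2% of legitimate accounts wrongly flagged.
correct, wrong, precision = flagged_breakdown(1_000_000, 0.02, 0.90, 0.02)
print(f"correctly flagged: {correct:,.0f}")
print(f"wrongly flagged:   {wrong:,.0f}")
print(f"precision:         {precision:.1%}")
```

Under these assumed rates, roughly 19,600 legitimate accounts would be flagged alongside about 18,000 underage ones, so more than half of all flagged accounts would belong to users who did nothing wrong.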

Regulatory Pressure and Future Implications

This technological rollout comes amid increasing regulatory scrutiny, particularly from European authorities investigating potential violations of digital services legislation. The pressure from multiple jurisdictions suggests this is just the beginning of more aggressive age verification measures across the industry.

From my perspective, this represents a broader trend toward automated content moderation that I find both necessary and troubling. While protecting children online is undeniably important, the normalization of AI systems analyzing our physical characteristics sets a precedent that extends far beyond age verification.

The real beneficiaries here are likely the platforms themselves, as they can demonstrate compliance efforts to regulators while potentially reducing liability. Parents seeking better protection for their children may also benefit, though the effectiveness remains to be proven. The losers are privacy-conscious users and potentially legitimate teenage users who may face unnecessary scrutiny or account restrictions.